Multi-Agent AI Architecture for Healthcare Contact Centers in the GCC

Editor’s note: At WHX Dubai 2026, Hadeel Abu Baker presented ScienceSoft’s architecture for an AI-first healthcare contact center. In this article, she expands on her conference talk by explaining the operating logic behind the design and detailing architectural decisions, integrations, safety controls, and rollout options. If you’re considering deploying AI for patient access, you can consult Hadeel or other ScienceSoft experts in AI for healthcare.

One Patient Call, Many Tasks

When I discuss patient access with healthcare leaders, I often start by asking them to imagine a regular patient call to the front desk. A patient wants to reschedule an appointment, keep the same doctor, receive directions via message, and get a reminder before the visit. Then they recall another commitment and change the preferred slot in the middle of the conversation.

If the front desk staff answered the call, they would likely perceive it as a single request. But if you put an AI assistant in their place, it would have to run multiple coordinated operations across scheduling, patient records, messaging, and follow-up systems to fulfill the request. This is where simple automation starts to fail, and this is why an AI-first contact center needs a governed operating model, not a stand-alone assistant.

The architecture I’ve presented is a strong fit for Gulf health systems that are under pressure to improve patient access and make better use of limited appointment capacity. In Qatar, for example, Hamad Medical Corporation (HMC) encourages patients who cannot attend a specialist appointment to cancel or reschedule it early through its 24/7, multilingual Nesma’ak Patient Contact Center. HMC notes that missed appointments lengthen wait times for other patients. This shows why AI-supported contact center models are becoming so relevant in the region.

Architecture: One Conversation, Many Specialists

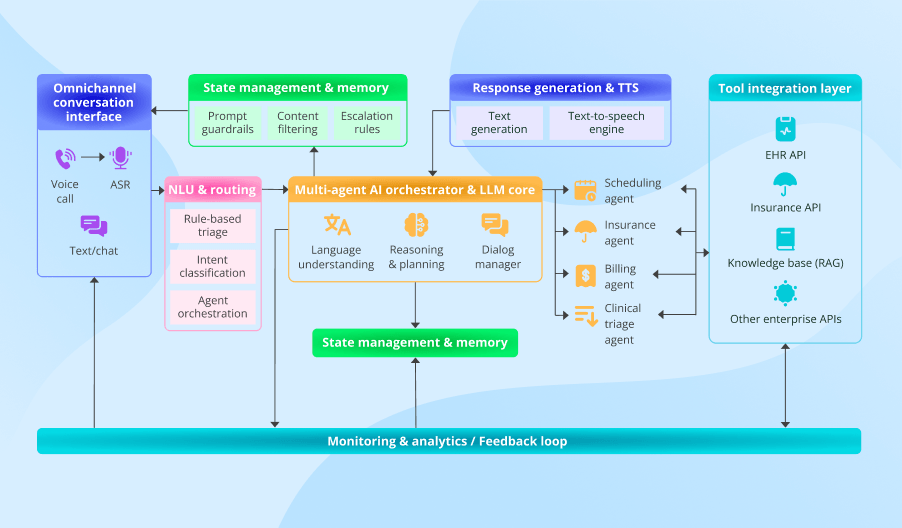

This architecture is the model I recommend for patient access because it turns complex requests into separate, controlled actions across the respective systems. It gives the contact center one operating layer for identity, scheduling, coverage, and follow-up, with escalation built into the flow.

The orchestrator is the central component of this system. It keeps the conversation context and identifies the caller’s intent. Then it checks whether it can handle the request and passes it to the appropriate single-purpose agent (e.g., a scheduling or a billing tool) or to a human, without forcing the caller to repeat details. With this architecture, the same interaction can move from identity checks to rescheduling, then to directions, and finally to reminder preferences, while the patient still experiences it as a single continuous exchange.

The single-purpose agents matter for a practical reason. Each one handles a fixed type of task, follows the explicit rules for that task, and can access only the systems it needs for it. That makes the architecture easier to test, update, and govern, because you can add or replace an agent without overhauling the entire system. In addition, the finite scope of tasks prevents the AI from “improvising” during the call: at each point, it is limited to a specific action path we defined in advance.
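The routing idea can be illustrated with a minimal sketch. It assumes intent detection has already produced a label; the `Conversation` type, the agent functions, and the intent names are hypothetical and are not taken from ScienceSoft’s implementation.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Conversation:
    """Context the orchestrator keeps so the caller never repeats details."""
    patient_id: str
    facts: Dict[str, str] = field(default_factory=dict)
    history: List[str] = field(default_factory=list)


# Hypothetical single-purpose agents: each handles one fixed task type.
def reschedule_agent(conv: Conversation) -> str:
    return f"rescheduled with {conv.facts.get('doctor', 'same doctor')}"


def directions_agent(conv: Conversation) -> str:
    return f"directions sent for {conv.facts.get('branch', 'booked branch')}"


AGENTS: Dict[str, Callable[[Conversation], str]] = {
    "reschedule": reschedule_agent,
    "directions": directions_agent,
}


def route(conv: Conversation, intent: str) -> str:
    """Dispatch a known intent to its agent; anything else goes to a human."""
    agent = AGENTS.get(intent)
    result = agent(conv) if agent is not None else "handed off to human"
    conv.history.append(f"{intent}: {result}")  # shared context survives each hop
    return result
```

Because agents live in a plain registry, adding or replacing one is a change to a single entry, which mirrors the point about updating an agent without overhauling the whole system.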

Why Specialists and Not a Super-Agent

A simple automation tool works well until the request stops being simple. One tool can change a slot, and another can check insurance. But once the same request needs both actions, the flow splits. Staff then have to recover context and finish the work across several systems.

A single super-agent that handles every task seems like the obvious fix, but it creates a different risk. The same model now has to manage identity, scheduling, coverage, directions, reminders, and escalation logic at once. A change in one workflow can weaken the rest, and a single model with too many access rights is harder to control. That is why our architecture keeps one orchestrator at the center and delegates execution to narrow agents with clear boundaries. Neither the orchestrator nor the agents have full access to the entire system’s functions, which results in coordinated task execution instead of the unpredictable behavior of one oversized decision-maker.
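One way to picture these boundaries is a per-agent allowlist that is checked before any system call. The sketch below is illustrative only: the agent names, system names, and the `authorize` helper are assumptions, not part of the presented architecture.

```python
from typing import Dict, FrozenSet

# Hypothetical allowlist: each agent may reach only the systems its task needs.
AGENT_SCOPES: Dict[str, FrozenSet[str]] = {
    "identity": frozenset({"patient_record"}),
    "scheduling": frozenset({"patient_record", "scheduling"}),
    "coverage": frozenset({"billing"}),
    "directions": frozenset({"knowledge_base", "messaging"}),
}


class ScopeError(PermissionError):
    """Raised when an agent tries to reach a system outside its scope."""


def authorize(agent: str, system: str) -> None:
    """Allow the call only if the system is on the agent's allowlist."""
    allowed = AGENT_SCOPES.get(agent, frozenset())  # unknown agents get nothing
    if system not in allowed:
        raise ScopeError(f"{agent} may not access {system}")
```

With a table like this, no single agent holds access to every system, and a permission change for one workflow touches only that agent's entry.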

But task coordination alone does not guarantee the system will work well. Each specialist agent still needs access to the right systems, the right write-backs, and the right fallback path when automation breaks or human input is needed.

What Must Be Integrated

Integration is where most of the operational complexity, cost, and delivery risk become visible. Each patient request has to reach the system responsible for the next step. It also has to return confirmed updates and follow-up tasks to the right staff software. Single-purpose agents connect to those systems through APIs.

- The patient record system supports identity checks and provides visit context, patient demographics, and appointment details already stored in the record.

- The scheduling system enables slot search, booking changes, and reminder settings.

- The billing and coverage system supports eligibility checks and payment answers.

- The contact center workspace receives call summaries, escalated tasks, and follow-up context.

- Approved knowledge sources provide branch directions, visit instructions, reminder content, and policy-backed answers to common questions.

- Messaging and location tools deliver confirmations, branch guidance, route details, and post-call follow-ups.

Updates received during the calls also need to be moved back into those systems. When the agent confirms a new slot or a reminder preference, it writes that result back to the source system. When automation cannot finish the task safely, the system creates a staff task with the confirmed context already attached. Staff should not have to reconstruct the request, the prior steps, or the missing decision from the start.
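The write-back and fallback behavior described above might look like the following sketch. The `StaffTask` fields and the `finish_step` helper are hypothetical, and the `write_back` callable stands in for whatever API the source system actually exposes.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class StaffTask:
    """Task for staff, with the confirmed context already attached."""
    request: str
    confirmed: Dict[str, str]
    reason: str


def finish_step(
    request: str,
    confirmed: Dict[str, str],
    write_back: Callable[[Dict[str, str]], None],
    staff_queue: List[StaffTask],
) -> str:
    """Write the confirmed result to the source system or hand it to staff."""
    try:
        write_back(confirmed)
        return "written_back"
    except Exception as exc:  # broad on purpose: any failure becomes a staff task
        staff_queue.append(StaffTask(request, dict(confirmed), str(exc)))
        return "staff_task_created"
```

The key design choice is that a failed write never disappears silently: the staff task carries the request, the confirmed details, and the failure reason, so nobody has to reconstruct the call.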

Where the Agent Must Stop

It is essential to define the line that the agent must not cross. That line appears in three situations:

In short, whenever uncertainty arises, the system triggers escalation. The agent should never guess. It should stop the automated workflow, create a structured handoff summary (containing the request, completed steps, pending work, and the reason for escalation), and pass the case to a human.

That summary matters as much as the stop itself. Contact center staff, front-desk teams, and nurses need to see what the agent confirmed, what it could not confirm, and what still requires human judgment, without having to call the patient and start the conversation from the beginning. The system should also maintain an audit record of identity checks, data access, system actions, and handoffs.
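As a rough illustration, the handoff summary and audit record can be modeled as small data structures. The summary fields follow the four elements named above; the class names, event names, and the `escalate` helper are assumptions made for this sketch.

```python
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Dict, List


@dataclass
class HandoffSummary:
    request: str
    completed_steps: List[str]
    pending_work: List[str]
    escalation_reason: str


# Event types mirror the four audit items listed in the text.
AUDIT_EVENTS = {"identity_check", "data_access", "system_action", "handoff"}


@dataclass
class AuditLog:
    entries: List[Dict[str, str]] = field(default_factory=list)

    def record(self, event: str, detail: str) -> None:
        if event not in AUDIT_EVENTS:
            raise ValueError(f"unknown audit event: {event}")
        stamp = datetime.now(timezone.utc).isoformat()
        self.entries.append({"at": stamp, "event": event, "detail": detail})


def escalate(summary: HandoffSummary, log: AuditLog) -> Dict[str, object]:
    """Stop automation: log the handoff and return the summary for staff."""
    if not summary.escalation_reason:
        raise ValueError("an escalation needs a stated reason")
    log.record("handoff", summary.escalation_reason)
    return asdict(summary)
```

Requiring a non-empty reason keeps every handoff explainable to the person who picks it up.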

Built for Gulf Patient Access

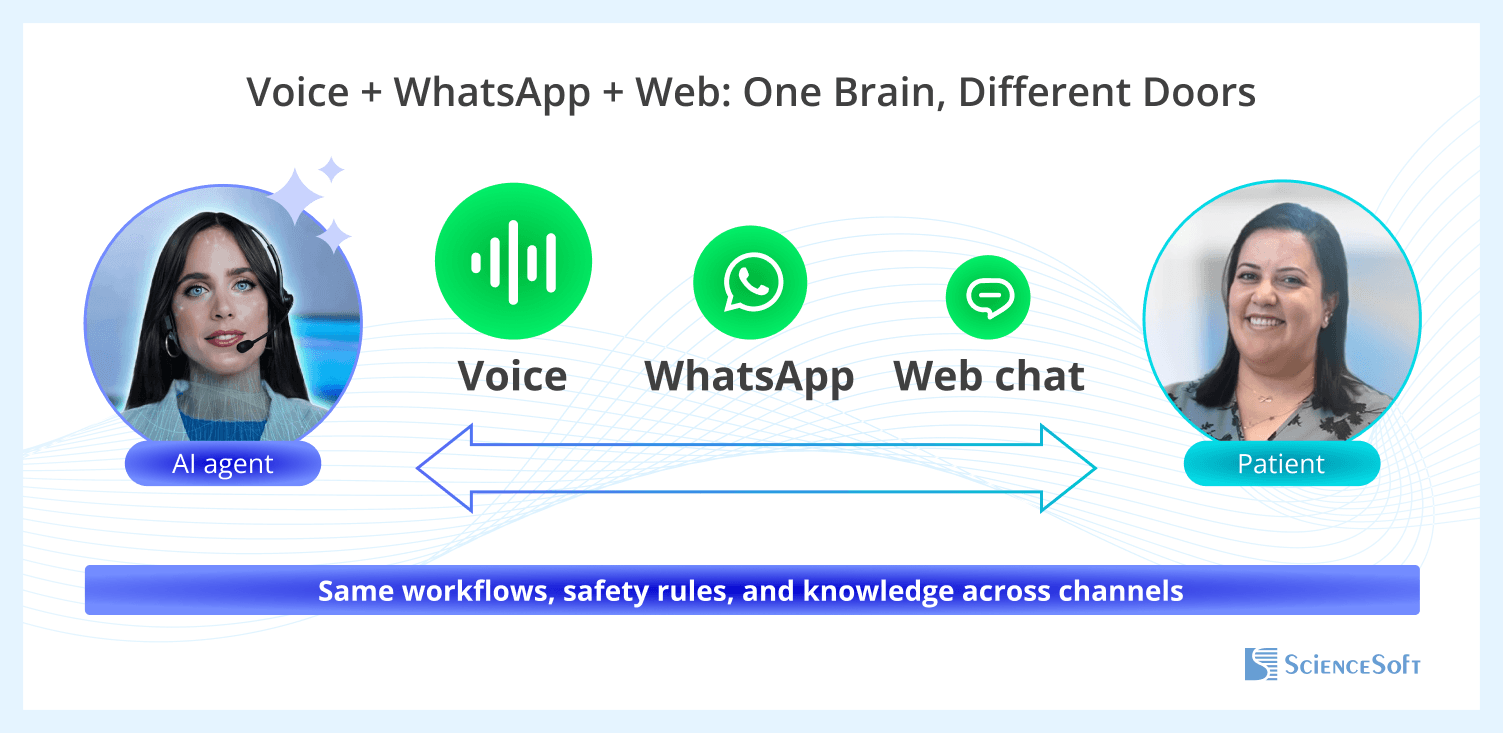

For Gulf providers, regional fit is not an afterthought — it changes the architecture from the start. Many access journeys in the region start in voice, move between Gulf Arabic and English, and end in WhatsApp messages. Because code-switching is common, the orchestrator identifies language first and preserves that context across channels, with the same safety rules and approved content.

ScienceSoft calls this approach One Brain, Different Doors because it preserves continuity across channels. The patient request moves from call to message without a restart, a second verification, or a new logic path.
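A minimal sketch of this continuity, assuming one in-memory session per patient: the `Session` fields, the `open_door` helper, and the language codes are hypothetical, and a real deployment would also need session expiry and persistent storage.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Session:
    patient_id: str
    language: str  # identified once, e.g. "ar-gulf" or "en" (illustrative codes)
    verified: bool = False
    channels: List[str] = field(default_factory=list)
    context: Dict[str, str] = field(default_factory=dict)


SESSIONS: Dict[str, Session] = {}  # stand-in for a shared session store


def open_door(patient_id: str, channel: str, detected_language: str) -> Session:
    """Return the patient's existing session, whichever channel they use."""
    session = SESSIONS.get(patient_id)
    if session is None:
        session = Session(patient_id, detected_language)
        SESSIONS[patient_id] = session
    session.channels.append(channel)  # a new door, the same brain
    return session
```

A call that later continues over messaging picks up the same object, so the language, verification status, and request context carry over without a restart.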

This architecture is a strong fit for the GCC also because patient access there depends on a wider mix of branch-specific instructions and insurer-specific coverage logic. Narrow agents make that variation easier to manage, because local updates can be made inside the relevant workflow instead of forcing changes across one large agent.

How to Roll It Out Safely

Some providers plan an AI contact center rollout around a growing share of covered calls and early savings. Yet a stronger starting point is proving that the system behaves reliably, stays inside defined boundaries, and hands cases to staff cleanly when automation stops.

ScienceSoft’s architecture supports this approach because it divides patient access into narrow workflows, each of which can be launched, tested, and corrected without disturbing the rest of the model. Those workflow boundaries make the rollout phased, predictable, and safe.

The first pilot works best when it covers only a few low-risk workflows with stable source systems, such as appointment changes, directions, and reminders. These workflows have a clear outcome, limited system dependencies, and little need for clinical judgment.

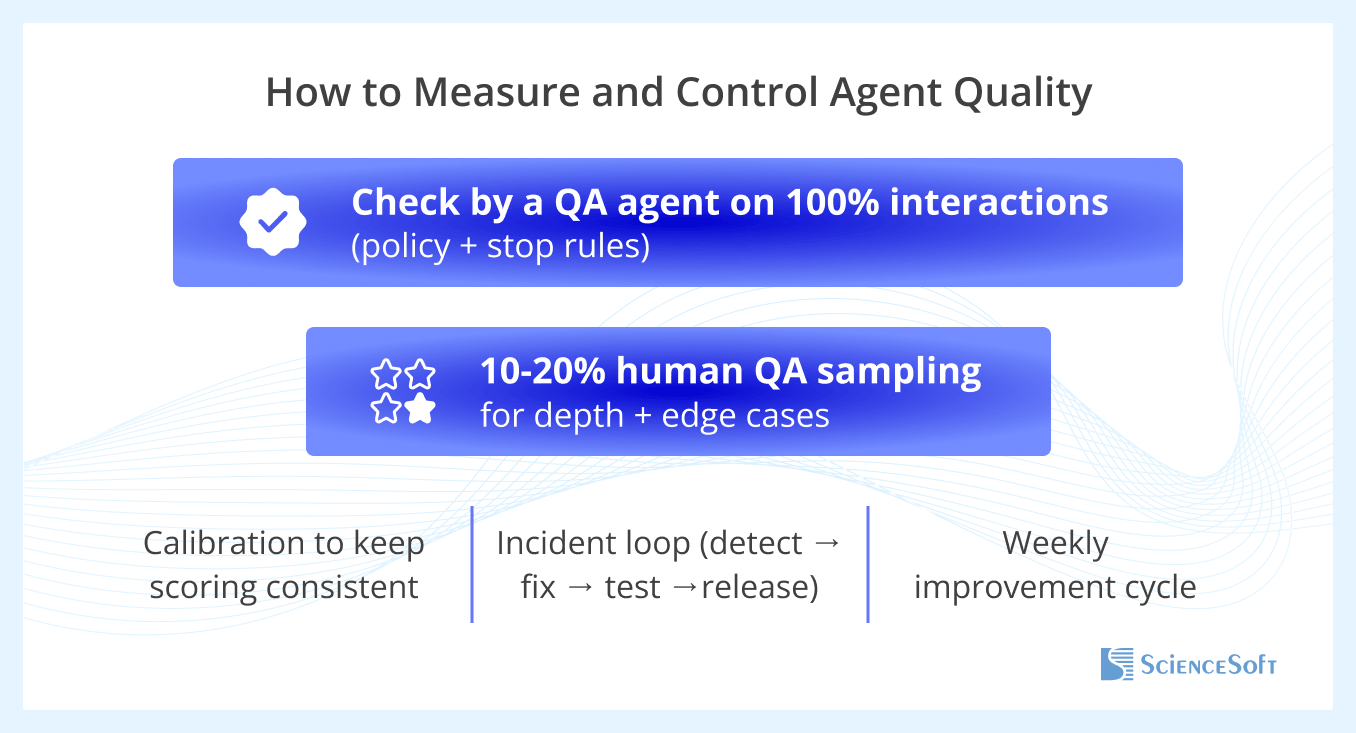

To test whether they run predictably, ScienceSoft recommends a two-layer QA model.

- Automated QA reviews all interactions against fixed controls, such as task routing accuracy, stop-rule compliance, handoff summary completeness, and correct system updates.

- Human review then focuses on hard cases, especially failed identity checks, unclear speech, workflow transition decisions, and escalation quality.
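The automated layer can be sketched as a set of fixed checks run over every logged interaction, with failures queued for human review. The record fields and control functions below are hypothetical and only illustrate the four controls named above.

```python
from typing import Callable, Dict, List

Interaction = Dict[str, object]  # one logged call or chat (hypothetical shape)

SUMMARY_FIELDS = ("request", "completed_steps", "pending_work", "escalation_reason")


def routed_correctly(i: Interaction) -> bool:
    return i["routed_to"] == i["expected_agent"]


def stop_rule_respected(i: Interaction) -> bool:
    return (not i["should_stop"]) or bool(i["escalated"])


def summary_complete(i: Interaction) -> bool:
    if not i["escalated"]:
        return True  # no handoff, so no summary is required
    summary = i.get("summary") or {}
    return all(name in summary for name in SUMMARY_FIELDS)


def update_correct(i: Interaction) -> bool:
    return i.get("written_value") == i.get("confirmed_value")


CONTROLS: Dict[str, Callable[[Interaction], bool]] = {
    "task_routing": routed_correctly,
    "stop_rule": stop_rule_respected,
    "summary_complete": summary_complete,
    "system_update": update_correct,
}


def review(interactions: List[Interaction]) -> Dict[str, List[str]]:
    """Map each failing interaction id to the controls it failed."""
    flagged: Dict[str, List[str]] = {}
    for i in interactions:
        failed = [name for name, check in CONTROLS.items() if not check(i)]
        if failed:
            flagged[str(i["id"])] = failed
    return flagged
```

The flagged set then feeds the human review layer, which keeps reviewers focused on the hard cases.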

When either review layer finds a recurring issue, the IT team can trace it to its root cause (e.g., stop rules, agent instructions, approved content, or integration logic) within the piloted specialist workflows. Each affected workflow is then corrected, retested, and released separately, without touching the rest of the software.

Later rollout steps likewise aim to prove the reliability of the next workflow rather than to cover more calls. Readiness for each next step becomes visible through a short scorecard for the current workflow. A practical scorecard can include:

- Safe escalation

- Failed identity checks

- Summary accuracy

- Handoff time

- Incorrect updates to integrated systems

- Repeat calls about the same issue

- Gaps in approved content

If those measures stay within target across several consecutive review cycles, the next workflow can be added to the pilot with low operational risk.
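The readiness rule can be expressed as a simple gate. In this sketch the metric names, the threshold values, and the default of three consecutive cycles are illustrative assumptions; each provider would set its own targets.

```python
from typing import Dict, List

# Hypothetical upper limits per review cycle (rates as fractions of calls).
TARGETS: Dict[str, float] = {
    "failed_identity_checks": 0.05,
    "wrong_system_updates": 0.0,
    "repeat_calls": 0.10,
}


def cycle_within_target(cycle: Dict[str, float]) -> bool:
    """A cycle passes only if every tracked measure is at or below its limit."""
    return all(
        cycle.get(name, float("inf")) <= limit  # a missing measure fails the cycle
        for name, limit in TARGETS.items()
    )


def ready_for_next_workflow(
    cycles: List[Dict[str, float]], required_consecutive: int = 3
) -> bool:
    """True when the most recent N review cycles all stayed within target."""
    if required_consecutive < 1:
        raise ValueError("required_consecutive must be at least 1")
    recent = cycles[-required_consecutive:]
    return len(recent) == required_consecutive and all(
        cycle_within_target(c) for c in recent
    )
```

Only the most recent cycles count, so an early bad cycle does not block expansion once the workflow has stabilized.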

With this rollout model, early business value appears first in reliable execution, lower rework, and cleaner handoffs within the pilot workflow. That operational proof creates the basis for expanding automation of patient access requests, reducing staff workload, and lowering operating costs with less delivery risk.

A dedicated session with ScienceSoft’s healthcare AI experts yields a practical roadmap covering priority use cases, GCC compliance checkpoints, integration steps, and budget and timeline estimates for Arabic-capable AI for patient engagement, clinical decision support, or clinical operations.
